Why knowledge management matters in 2026 + 5 practical tips

Everyone’s losing their mind over agents, neural nets, and AI coding. In 2026 there’ll be more of it — that’s a given.

My take is simple: value shifts to the person who can frame the problem and control execution. And to do that consistently (not “trust me bro” style), you need a personal knowledge system.

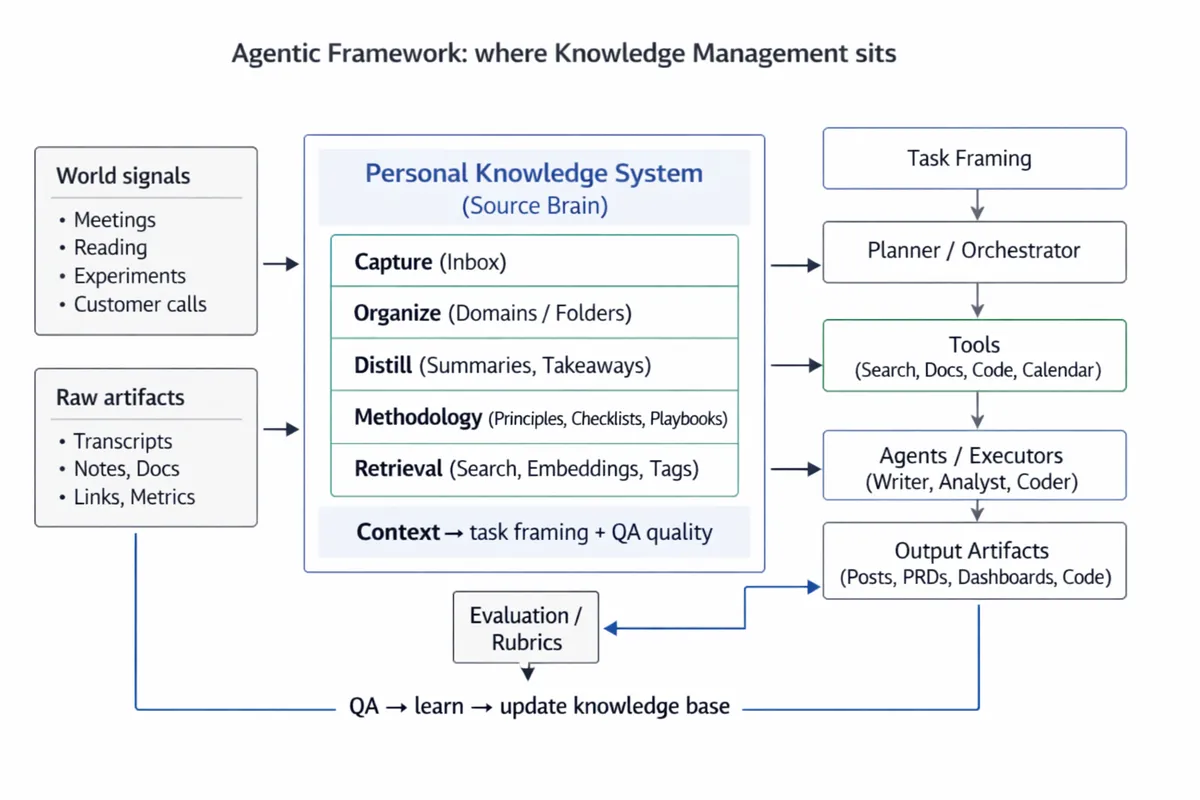

Once we outsource code and routine white-collar tasks to agents, the core skill stops being “how to do it” and becomes framing the task and QA-ing the outputs. Without a knowledge base, you get chaos: you steer by gut feeling, argue from authority, and spend your life editing hallucinations.

How to package a knowledge system into scalable AI products

Consider what happened with two product people who basically turned their brains into software.

First: Lenny Rachitsky → Lennybot. Lenny built Lennybot by feeding an agent transcripts of his podcast episodes, plus metadata and accumulated product context. The outcome is “Lenny v2”: a knowledge engine you can talk to, and a lead magnet for paid services.

Second: Vanya Zamesin → Aura Framework. Vanya packaged his methodology and experience into Aura Framework. The order matters: knowledge system first, then AI tools on top, then a UI for “how you talk to the tools”. The result is a platform-like workflow where agents generate product artifacts ~10x faster using that methodology.

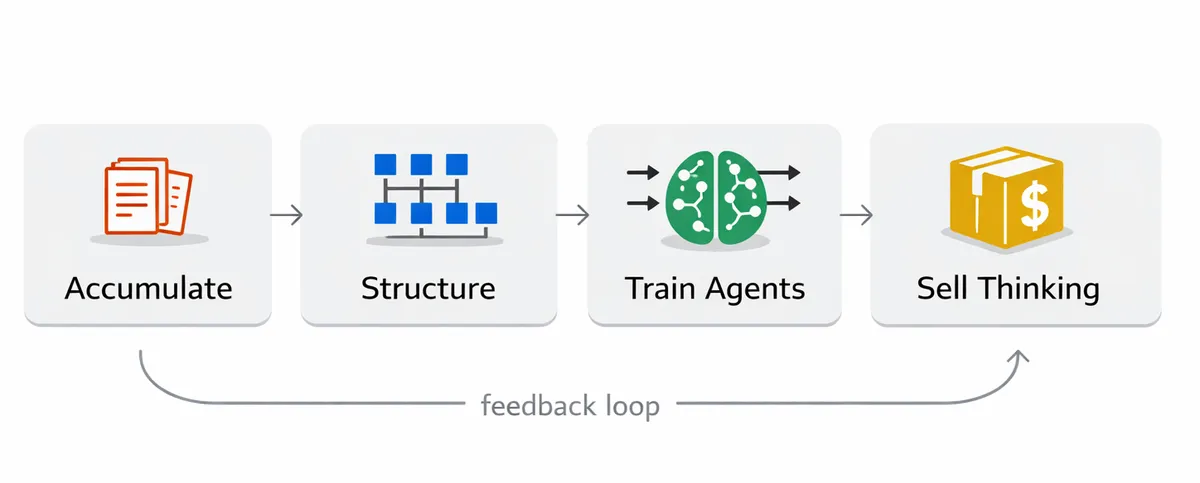

The shared pattern is almost boring in its simplicity: they didn’t “just add AI”. They accumulated and structured knowledge, turned it into a formal methodology, gave agents access and trained them on that methodology, and then sold reproducible thinking as SaaS instead of selling their time.
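The “gave agents access” step can start very small. Below is a minimal sketch, not how Lennybot or Aura Framework actually work (their internals aren’t described here): it ranks plain-text notes by word overlap with a question and packs the best matches into a prompt. `top_notes`, `build_prompt`, and the stopword list are hypothetical names chosen for illustration; many production systems use embedding search instead of word counts.

```python
import re
from collections import Counter

# Illustrative stopword list so filler words don't dominate the ranking.
STOPWORDS = {"a", "an", "the", "how", "what", "why", "do", "does", "i",
             "should", "to", "of", "and", "in", "on", "for", "is", "my"}


def tokenize(text: str) -> list[str]:
    """Lowercase word tokens with stopwords removed."""
    return [w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS]


def top_notes(question: str, notes: dict[str, str], k: int = 2) -> list[str]:
    """Rank notes by how often they use the question's words (crude keyword retrieval)."""
    wanted = set(tokenize(question))
    scored = []
    for title, body in notes.items():
        counts = Counter(tokenize(body))
        score = sum(counts[w] for w in wanted)
        if score > 0:
            scored.append((score, title))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))  # best first, ties by title
    return [title for _, title in scored[:k]]


def build_prompt(question: str, notes: dict[str, str], k: int = 2) -> str:
    """Pack the best-matching notes into the agent's context."""
    picked = top_notes(question, notes, k)
    context = "\n\n".join(f"## {title}\n{notes[title]}" for title in picked)
    return ("Answer using only these notes. If they don't cover it, say so.\n\n"
            f"{context}\n\nQuestion: {question}")
```

The “answer only from these notes” instruction is the point: the agent is steered by your knowledge base rather than by whatever it half-remembers from the web.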

Why knowledge management is the new superpower

Agents need a “source brain”. Without it, they hallucinate, and you end up editing forever. Vibe coding is fun, but without context it turns into a slop generator. The more we automate, the more value shifts from “how” to “what exactly are we doing”.

Also: domain knowledge is gold, and people are willing to pay for it. AI is still mostly a strong representation of the web, but real insight comes from human experience and interaction with the world. Your knowledge base is basically a collection of observations about what actually works for you, and that’s priceless.

Even the macro trend points there: this HBR piece argues that AI doesn’t reduce work — it intensifies it.

So the winner isn’t the “smartest” person — it’s the one who learns faster, validates rigorously, and continuously feeds a structured knowledge base that powers their AI workforce.

How I do it (my stack)

I treat knowledge like an input pipeline.

Raw inputs first: I store call transcripts in Granola and auto-sort them into folders (therapy / German / personal growth / work projects, etc.). This is my “unfiltered reality”.
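If your capture tool doesn’t sort for you, the idea is easy to approximate locally. A minimal sketch, assuming transcripts are exported as .txt files: each one goes to the folder whose keywords it mentions most, with an `inbox` fallback for anything unmatched. `ROUTES`, the keyword lists, and the function names are hypothetical; this is not Granola’s actual mechanism.

```python
import shutil
from pathlib import Path

# Hypothetical routing rules: folder name -> keywords that suggest it.
# The keywords are illustrative assumptions; tune them to your own calls.
ROUTES: dict[str, tuple[str, ...]] = {
    "therapy": ("therapist", "session", "feelings"),
    "german": ("deutsch", "german", "grammatik"),
    "personal-growth": ("habit", "goal", "reflection"),
    "work-projects": ("roadmap", "sprint", "stakeholder"),
}
FALLBACK = "inbox"  # unmatched transcripts wait here for manual sorting


def route_transcript(text: str) -> str:
    """Return the folder whose keywords appear most often in the text."""
    lowered = text.lower()
    scores = {
        folder: sum(lowered.count(word) for word in words)
        for folder, words in ROUTES.items()
    }
    best = max(scores, key=scores.get)  # first folder wins on ties
    return best if scores[best] > 0 else FALLBACK


def sort_transcripts(src: Path, dest_root: Path) -> None:
    """Move every .txt transcript in src into dest_root/<folder>/."""
    for path in src.glob("*.txt"):
        folder = dest_root / route_transcript(path.read_text(encoding="utf-8"))
        folder.mkdir(parents=True, exist_ok=True)
        shutil.move(str(path), str(folder / path.name))
```

Keyword rules are dumb but transparent: when something lands in the wrong folder, you can see exactly why and fix one word.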

Then drafting speed: I dictate drafts through Wispr Flow — fast voice-to-text on desktop and phone, plus it auto-fixes grammar and punctuation. It removes friction, which matters more than people admit.

Then the glue layer: Raycast is where everything connects — global search, prompt management (I wrote about it here), and small automations for turning inputs into notes without making it a whole “project”.

Finally, I keep my source of truth in Notion (or Obsidian). And I’m building a flow for summarizing articles and updating the knowledge base, because the system only works if it keeps absorbing reality.

What excites me about this shift

I think the market is moving toward “knowledge workers with agents” becoming more valuable, not less. Not because they type faster — but because they can deploy judgment at scale.

Big companies already write a meaningful chunk of their code with AI: figures around 30% are often cited for Microsoft, Google, and Meta, for example in this Entrepreneur piece and this Outsource Accelerator write-up.

And “AI skills” already correlate with higher pay — see this overview.

Personally, it’s also selfishly perfect: I’ve always loved reading and taking notes. Now it’s win-win — fun for me, and high-quality context (food) for agents.

My advice (if you want to become a god for your agents 😎)

Start boring. Collect knowledge as .md notes and organize them. Keep it simple: pick at most five folders/domains you care about. Think Sherlock’s mind palace.
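A minimal sketch of the “boring” setup, assuming a local folder of Markdown files (which is what an Obsidian vault is): a hard cap of five domains and a helper that writes a note with a small front-matter header. The domain names, `new_note`, and the front-matter fields are placeholders to adapt.

```python
import re
from datetime import date
from pathlib import Path

# Placeholder domains: the advice is "max 5", the names are up to you.
DOMAINS = ("ai", "product", "writing", "languages", "health")
MAX_DOMAINS = 5
assert len(DOMAINS) <= MAX_DOMAINS, "keep it boring: at most five domains"


def slugify(title: str) -> str:
    """'Task framing 101' -> 'task-framing-101' (safe, predictable file names)."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def new_note(vault: Path, domain: str, title: str, body: str,
             tags: tuple[str, ...] = ()) -> Path:
    """Write a plain .md note with a small front-matter header into one domain folder."""
    if domain not in DOMAINS:
        raise ValueError(f"unknown domain {domain!r}; pick one of {DOMAINS}")
    folder = vault / domain
    folder.mkdir(parents=True, exist_ok=True)
    header = "\n".join([
        "---",
        f"title: {title}",
        f"created: {date.today().isoformat()}",
        f"tags: [{', '.join(tags)}]",
        "---",
    ])
    path = folder / f"{slugify(title)}.md"
    path.write_text(f"{header}\n\n{body}\n", encoding="utf-8")
    return path
```

Plain files plus a fixed set of folders means any tool (Notion import, Obsidian, or an agent with file access) can read the same notes.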

Use a personal blog as a forcing function: it makes you distill ideas and drop the fluff. Then add agents in a loop: task → QA → write the distilled learning back into the knowledge base. That’s the compounding engine.
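The task → QA → write-back loop fits in a few lines. A minimal sketch: the agent and the reviewer are plain functions you plug in (`toy_agent` and `toy_review` are stand-ins, not a real model or API), and `kb` is an in-memory list of Markdown bullets standing in for a knowledge-base note. Each rejected draft leaves a lesson behind, and the next round sees it.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical plug-in points: swap in a real model call and your own review.
Agent = Callable[[str, str], str]              # (task, context) -> draft
Reviewer = Callable[[str], tuple[bool, str]]   # draft -> (accepted?, lesson)


@dataclass
class Result:
    output: str
    accepted: bool
    rounds: int


def run_loop(task: str, agent: Agent, review: Reviewer,
             kb: list[str], max_rounds: int = 3) -> Result:
    """task -> QA -> write the distilled lesson back into the knowledge base."""
    output, accepted = "", False
    for round_no in range(1, max_rounds + 1):
        context = "\n".join(kb)          # the agent always sees the current KB
        output = agent(task, context)
        accepted, lesson = review(output)
        if lesson:
            kb.append(f"- {task}: {lesson}")  # write the learning back
        if accepted:
            return Result(output, True, round_no)
    return Result(output, accepted, max_rounds)


# Toy stand-ins so the loop runs without any model.
def toy_agent(task: str, context: str) -> str:
    if "keep it short" in context:
        return "Short summary."
    return "A very long rambling draft " * 3


def toy_review(draft: str) -> tuple[bool, str]:
    ok = len(draft) <= 20
    return ok, ("" if ok else "keep it short")


if __name__ == "__main__":
    kb: list[str] = []
    result = run_loop("Summarize article", toy_agent, toy_review, kb)
    print(result)  # accepted on round 2
    print(kb)      # ['- Summarize article: keep it short']
```

With the toy pair, the first draft is rejected, the lesson “keep it short” lands in `kb`, and the second round passes because the agent now sees that lesson in its context. In practice you would append the `kb` lines to a .md file in your vault so the lesson survives the session.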

What I’m building right now is basically that loop, productized:

- A speaking practice assistant: daily clear-speaking tasks, you record answers, it stores mistakes and gives short recommendations.

- A bot that updates my AI knowledge base (you can browse it here: AI knowledge base).

TL;DR

Agents will take the “doing”. You’ll keep: (1) deciding what to do, (2) supplying domain knowledge, (3) verifying outputs.

And the person who has a knowledge system — and the discipline to keep it updated — will be an order of magnitude more effective.